Given its “Don’t be evil” motto, Google’s decision to work with the world’s most powerful military may seem surprising.

The tech giants have faced unprecedented scrutiny lately, with commissions, boards and committees around the world decrying the effect that they have had on society, from influencing elections to facilitating disinformation. But one area where their algorithms have the potential for huge impact has until recently flown mostly under the radar.

Google’s involvement in the Pentagon’s Algorithmic Warfare Cross-Functional Team, known as Project Maven, was leaked to Gizmodo this week by current Google employees. Google provided access to TensorFlow APIs (application programming interfaces), the programming interfaces to its open-source machine-learning software, which allow developers to build and run machine-learning models.

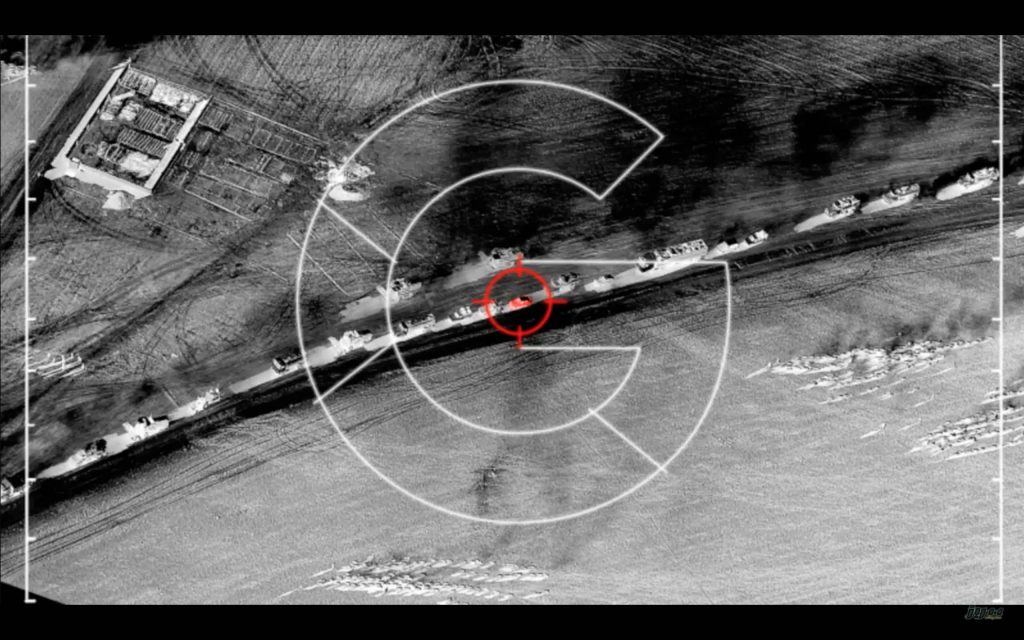

These systems enable the military to sift through the vast quantities of footage collected by drones, identify objects of interest and then flag them for a trained human analyst to review. Google has stated that the partnership is a pilot and that its tools are solely for non-offensive use, though that seems difficult to prove. Reports indicate that the technology has been used in operations against Islamic State since December 2017.


While the news has generated controversy because it is rare for this new breed of consumer tech company to collaborate directly with the military, the armed forces have always played an important role in developing novel technologies, including for civilian use. The internet itself grew out of ARPANET, a research project funded by DARPA, part of the US Department of Defense, which was intended to give military personnel a way to communicate without needing a phone.

But times have changed, leaving governmental agencies scrambling to keep up. Ulrike Franke, an expert on drones and the military at the University of Oxford, points out that “cutting-edge research on new technologies is no longer done primarily in the military, in the US through DARPA, but at Google and the like.” There are several reasons for this, but the main one is that recent decades have seen an acceleration in technical innovation that a company free of bureaucratic restraints, and without national security issues to consider, can pursue and develop far better than a government agency.

“One of the key issues with UAVs is that they produce a lot more data than human beings can assess, given the resource constraints that militaries and intelligence agencies have to operate with,” says Dr Jack McDonald, a lecturer in War Studies at King’s College London who specialises in the relationship between the ethics of war and new technology. “Artificial intelligence, or rather knowledge discovery by computer systems, could enable the same number of human beings to leverage these volumes of data.”

In 2017, the Pentagon invested $7.4bn directly in developing artificial intelligence and machine learning capabilities. The Department of Defense memo establishing Project Maven stresses that integrating machine learning and artificial intelligence is crucial if the department is to maintain “advantages over increasingly capable adversaries and competitors”.

Given Google’s pioneering work in the field, as well as the links that many board members of its parent company, Alphabet, have with the defence community, Google is, technically speaking, in the perfect position to inform the development of this specific programme.

Executives at both Google and its artificial intelligence arm, DeepMind, were among the first to speak about setting up ethical advisory boards to investigate the social implications of artificial intelligence. These boards are meant to stave off the danger of algorithms wreaking havoc on society with impunity.

Within the US in particular, there is a long history of private companies, such as Lockheed Martin, carrying out contracted work for military agencies. Government contracts are usually lucrative and long-term, and they arguably give a company like Google the opportunity to play a greater role, independent of other companies, in developing artificial intelligence for military use. That alone would be sufficient incentive.

It could be argued that tech companies will inevitably have to find a place in the military ecosystem, given how many of the new kinds of battles being waged are digital. As Dr McDonald points out, “computer systems and technologies associated with AI are fundamentally dual-use – they could easily be repurposed for military use.”

Others argue that if Google is able to refine the surveillance tools that the military will inevitably use, then it has a moral responsibility to be involved: it cannot fundamentally alter the course of US foreign policy, but it can reduce the number of unnecessary casualties.

And yet Google employees leaked the internal emails to Gizmodo because they were outraged that their work would be used for surveillance technologies. For a company with “Don’t be evil” as one of its mottos, Google’s decision to work with the world’s most powerful military should be surprising, even to the people who work there.

Google’s previous track record also shows a reluctance to be involved with military projects, even indirectly. When Google acquired Skybox, a satellite-imaging company, in 2014, it ended some of Skybox’s military contracts, and its robotics teams have not entered competitions run by the Pentagon, unlike many other companies with similar (or even lesser) capabilities. Given this history, Google’s choice to work in such an unregulated field, at the intersection of two emerging technologies (drones and artificial intelligence), looks like a change of course.

Artificial intelligence poses significant challenges outside a warzone, let alone in combination with weapons. It could remove accountability for operations, reducing the deaths of civilians to no more than a computer error. The US government’s deployment of drones has been deeply flawed, and more accurate identification of “objects” alone is unlikely to change that. And if those “objects” are terrorists, that raises a whole range of further issues, such as what data the system is trained on and how a terrorist is even classified, as an Ars Technica article has detailed.

Given what’s at stake, Google’s involvement in Project Maven, and by extension in the military-industrial complex, adds a particularly thorny dimension to the debates raging about the role that technology, and the firms that create it, play in our society.

This feature originally appeared in the New Statesman.