Last year was full of cybersecurity disasters, from the revelation of security flaws in billions of microchips to massive data breaches and attacks using malicious software that locks down computer systems until a ransom is paid, usually in the form of an untraceable digital currency.

We’re going to see more mega-breaches and ransomware attacks in 2019. Planning to deal with these and other established risks, like threats to web-connected consumer devices and critical infrastructure such as electrical grids and transport systems, will be a top priority for security teams. But cyber-defenders should be paying attention to new threats, too. Here are some that should be on watch lists:

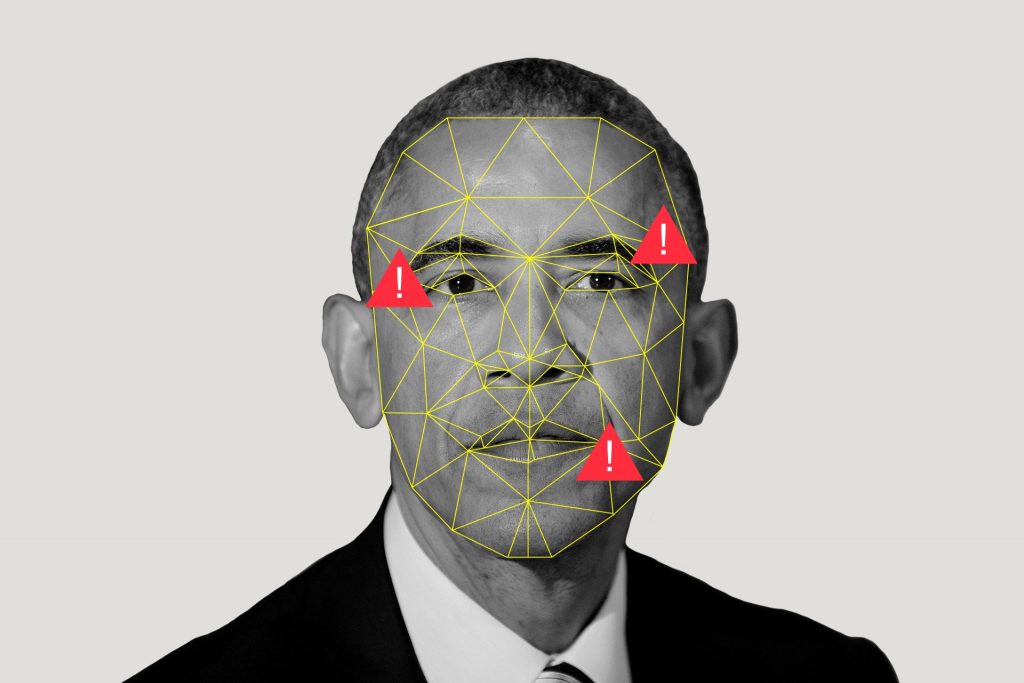

Exploiting AI-generated fake video and audio

Thanks to advances in artificial intelligence, it’s now possible to create fake video and audio messages that are incredibly difficult to distinguish from the real thing. These “deepfakes” could be a boon to hackers in a couple of ways. AI-generated “phishing” e-mails that aim to trick people into handing over passwords and other sensitive data have already been shown to be more effective than ones generated by humans. Now hackers will be able to throw highly realistic fake video and audio into the mix, either to reinforce instructions in a phishing e-mail or as a standalone tactic.

Cybercriminals could also use the technology to manipulate stock prices by, say, posting a fake video of a CEO announcing that a company is facing a financing problem or some other crisis. There’s also the danger that deepfakes could be used to spread false news in elections and to stoke geopolitical tensions.

Such ploys would once have required the resources of a big movie studio, but now they can be pulled off by anyone with a decent computer and a powerful graphics card. Startups are developing technology to detect deepfakes, but it’s unclear how effective their efforts will be. In the meantime, the only real line of defense is security awareness training to sensitize people to the risk.

Poisoning AI defenses

Security companies have rushed to embrace AI models as a way to help anticipate and detect cyberattacks. However, sophisticated hackers could try to corrupt these defenses. “AI can help us parse signals from noise,” says Nate Fick, CEO of the security firm Endgame, but “in the hands of the wrong people,” it’s also AI that’s going to generate the most sophisticated attacks.

Generative adversarial networks, or GANs, which pit two neural networks against one another, can be used to try to guess what algorithms defenders are using in their AI models. Another risk is that hackers will target data sets used to train models and poison them, for instance by switching labels on samples of malicious code to indicate that they are safe rather than suspect.
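To make the label-switching risk concrete, here is a minimal sketch in Python. Everything in it is an illustrative assumption rather than a description of any real security product: the two numeric features, the sample values, and the simple nearest-centroid classifier are all hypothetical stand-ins for a production malware detector.

```python
from statistics import mean

def train_centroids(samples):
    """Average the feature vectors of each label (a toy nearest-centroid model)."""
    by_label = {}
    for features, label in samples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(column) for column in zip(*rows))
        for label, rows in by_label.items()
    }

def predict(centroids, features):
    """Return the label whose centroid is closest to the given features."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, features))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))

def flip_labels(samples, indices, new_label="safe"):
    """Simulate poisoning: relabel the chosen training samples as new_label."""
    return [
        (features, new_label if i in indices else label)
        for i, (features, label) in enumerate(samples)
    ]

# Hypothetical training data: (feature vector, label). The features are made up.
clean_samples = [
    ((0.1, 0.2), "safe"),
    ((0.2, 0.1), "safe"),
    ((0.3, 0.2), "safe"),
    ((0.8, 0.9), "malicious"),
    ((0.9, 0.8), "malicious"),
    ((0.7, 0.9), "malicious"),
]

# The attacker relabels two of the three malicious samples as safe.
poisoned_samples = flip_labels(clean_samples, indices={4, 5})

probe = (0.6, 0.6)  # a new, fairly suspicious-looking sample
clean_verdict = predict(train_centroids(clean_samples), probe)
poisoned_verdict = predict(train_centroids(poisoned_samples), probe)

print("trained on clean data:   ", clean_verdict)     # malicious
print("trained on poisoned data:", poisoned_verdict)  # safe
```

In this toy setup, relabeling just two training samples drags the "safe" centroid toward the malicious region, so the same probe that was flagged before now passes as safe. Real models and real poisoning attacks are far more complex, but the mechanism is the same: the model faithfully learns whatever its labels tell it.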

Hacking smart contracts

Smart contracts are software programs stored on a blockchain that automatically execute some form of digital asset exchange if conditions encoded in them are met. Entrepreneurs are pitching their use for everything from money transfers to intellectual-property protection. But it’s still early in their development, and researchers are finding bugs in some of them. So are hackers, who have exploited flaws to steal millions of dollars’ worth of cryptocurrencies.
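The idea of an exchange that executes itself once its encoded conditions are met can be sketched in a few lines of ordinary Python. This is only a toy model under stated assumptions: `ToyEscrow`, the in-memory `ledger` dictionary, and the account names are hypothetical, and nothing here runs on a blockchain or uses a real smart-contract language.

```python
class ToyEscrow:
    """Toy stand-in for a smart contract: pays the seller once conditions hold."""

    def __init__(self, ledger, buyer, seller, price):
        self.ledger = ledger      # hypothetical shared balance sheet
        self.buyer = buyer
        self.seller = seller
        self.price = price
        self.held = 0             # funds locked inside the contract
        self.delivered = False
        self.settled = False

    def deposit(self, sender, amount):
        """Buyer locks funds in the contract; settlement is attempted afterwards."""
        if sender != self.buyer or amount <= 0 or self.ledger[sender] < amount:
            raise ValueError("deposit rejected")
        self.ledger[sender] -= amount
        self.held += amount
        return self._try_settle()

    def confirm_delivery(self, sender):
        """Buyer confirms receipt; settlement is attempted afterwards."""
        if sender != self.buyer:
            raise PermissionError("only the buyer can confirm delivery")
        self.delivered = True
        return self._try_settle()

    def _try_settle(self):
        """Release funds only if every encoded condition is met, and only once."""
        if self.settled or not self.delivered or self.held < self.price:
            return False
        self.ledger[self.seller] += self.held
        self.held = 0
        self.settled = True
        return True


ledger = {"alice": 10, "bob": 0}
escrow = ToyEscrow(ledger, buyer="alice", seller="bob", price=7)

paid_after_deposit = escrow.deposit("alice", 7)       # delivery not confirmed yet
paid_after_confirm = escrow.confirm_delivery("alice")  # all conditions now met

print(paid_after_deposit, paid_after_confirm, ledger)
```

Every check in `_try_settle` matters. Dropping the `self.settled` guard or the sender checks, for example, is the kind of small logic flaw that can let an attacker drain funds from a contract once real money is locked inside it.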

The fundamental issue is that blockchains were designed to be transparent. Keeping data associated with smart contracts private is therefore a challenge. “We need to build privacy-preserving technologies into [smart contract] platforms,” says Dawn Song, a professor at the University of California, Berkeley, and the CEO of Oasis Labs, a startup that’s working on ways to do this using special hardware.

Breaking encryption using quantum computers

Security experts predict that quantum computers, which harness exotic phenomena from quantum physics to produce exponential leaps in processing power, could crack encryption that currently helps protect everything from e-commerce transactions to health records.

Quantum machines are still in their infancy, and it could be some years before they pose a serious threat. But products like cars whose software can be updated remotely will still be in use a decade or more from now. The encryption baked into them today could ultimately become vulnerable to quantum attack. The same holds true for code used to protect sensitive data, like financial records, that need to be stored for many years.

A recent report from a group of US quantum experts urges organizations to start adopting new and forthcoming kinds of encryption algorithms that can withstand a quantum attack. And government organizations like the US National Institute of Standards and Technology are working on standards for post-quantum cryptography to make this process easier.

Attacking from the computing cloud

Businesses that host other companies’ data on their servers, or manage clients’ IT systems remotely, make super-tempting targets for hackers. By breaching these companies’ systems, hackers can get access to those of their clients, too. Big cloud companies like Amazon and Google can afford to invest heavily in cybersecurity defenses and pay salaries that attract some of the best talent in the field. That doesn’t make them immune to a breach, but it’s more likely that hackers will target smaller firms.

This has already started to happen. The US government recently accused Chinese hackers of sneaking into the systems of a company that managed IT for other firms. Using this foothold, the hackers were allegedly able to reach the computers of 45 companies around the world, in industries from aviation to oil and gas exploration.

Dubbed “Cloudhopper” by security experts, the attack is just the tip of what’s going to be a fast-growing iceberg. “You’re going to see [hackers] move from focusing on desktop malware to data-center malware” that offers significant economies of scale, says Chenxi Wang, the founder of Rain Capital, a venture capital firm that specializes in cybersecurity.

Some of the other risks we’ve listed may seem less pressing than this one. But when it comes to cybersecurity, the companies best prepared to tackle tomorrow’s threats will be the ones most willing to exercise their imaginations today.

This article was written by Martin Giles and originally appeared in MIT Technology Review.